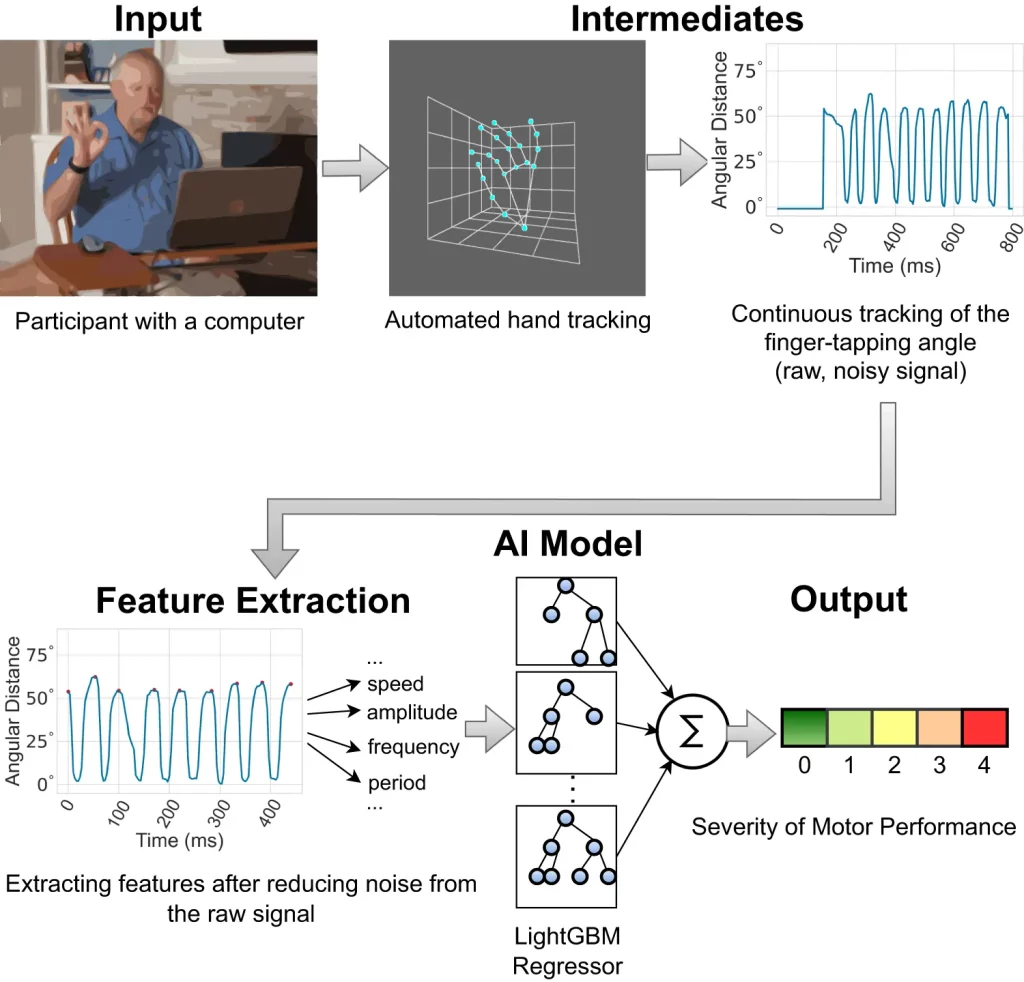

A team of researchers has developed an Artificial Intelligence (AI) based model that assesses the severity of motor symptoms in individuals diagnosed with Parkinson’s disease. The model, a LightGBM regressor combined with MediaPipe for pose estimation, analyzes motor movements in finger-tapping videos sent by patients. It has been shown to assess severity more effectively than non-expert practitioners, although expert neurologists still outperform it. On several measures, however, its accuracy is nearly as high as that of the experts.

Parkinson’s disease (PD) affects millions of people around the world and, unfortunately, has no known cure at present. It is possible to diagnose the disease early and manage the symptoms through regular clinical visits and checkups. Regrettably, access to such services is limited: they are not available close to everyone who needs them, and even where they are, reaching them can be challenging for older individuals with movement impairments. Tracking the progression of the disease before it is too late is important, which is why its severity needs to be checked from time to time.

Keeping track of the severity of the disease

Conventionally, expert neurologists assess bradykinesia (slowing of movement), a standard symptom of PD, using a standardized ‘finger-tapping’ task. The task is simple: the patient taps the index finger on the thumb multiple times, as fast as possible, and taps per unit time are counted. The severity is rated between 0 and 4, with 0 being the lowest and 4 being the highest, following the Movement Disorder Society Unified Parkinson’s Disease Rating Scale (MDS-UPDRS). In areas without access to expert neurologists and care facilities, non-experts are consulted to measure the severity. This alternative can only be a temporary solution, as the experience of experts far surpasses that of non-experts.

Artificial Intelligence comes into play

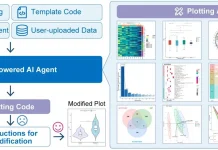

Artificial Intelligence models have been used to address the problem of inaccessibility by making it possible to assess severity from the comfort of patients’ homes. Here, the task performed by the patients is recorded through a webcam and analyzed by a LightGBM regressor model, with MediaPipe used as the pose-estimation model. To compare the performance of this model with expert assessment, three expert neurologists and two non-experts were asked to rate the same set of data as the Artificial Intelligence model. All the doctors involved are certified raters, but the experts actively treat PD patients and have more than 10 years of experience in the field.

Image Source: https://doi.org/10.1038/s41746-023-00905-9

Exploring how the model works

The model is trained to detect clinically relevant features in videos recorded at 30 frames per second. One of the most significant features is the interquartile range (IQR) of the finger-tapping speed. When the index finger moves towards the thumb to touch it, it decelerates, and when it moves back up, away from the thumb, it accelerates. MediaPipe traces this movement by providing coordinates for the finger positions at successive points in time. The IQR, which is the difference between the 75th and 25th percentiles of the speed, is then related to severity using statistical measures such as Pearson’s correlation coefficient (PCC). A wider range suggests healthy motor function, while a narrower range corresponds to the symptoms of PD.
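As a rough illustration of the IQR feature and the correlation step, here is a minimal Python sketch. It is not the researchers’ code: it assumes the thumb-to-index distance has already been extracted for each frame (for example from MediaPipe hand landmarks), and the function names and the way speed is derived from distance are hypothetical choices made for this example.

```python
import math
import statistics

FPS = 30  # frame rate mentioned in the article


def tapping_speeds(distances, fps=FPS):
    """Speed as the absolute change in thumb-index distance per second.

    `distances` is an assumed input: one distance value per video frame.
    """
    return [abs(b - a) * fps for a, b in zip(distances, distances[1:])]


def interquartile_range(values):
    """IQR: 75th percentile minus 25th percentile."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return q3 - q1


def pearson(xs, ys):
    """Pearson's correlation coefficient between two equal-length lists."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def speed_iqr(distances, fps=FPS):
    """One feature value per video: the IQR of the tapping speed."""
    return interquartile_range(tapping_speeds(distances, fps))
```

With one `speed_iqr` value per video and the matching severity ratings, `pearson(iqr_values, severity_scores)` would give the kind of correlation described above; a negative coefficient would mean that a wider speed range goes with lower severity.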

Along with IQR, measures of variance and entropy of tapping periods and the standard deviation of finger-tapping frequency are all positively correlated with Parkinson’s severity; they all indicate a lack of consistency in the amplitude and frequency of finger tapping. SHapley Additive exPlanations (SHAP) were used to interpret the machine-learning model and identify the features that mattered most to its predictions. These features matched the standard criteria used by experts to assess the severity of PD, which further supports the reliability of this model.
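The consistency measures mentioned here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the input is a hypothetical list of frame indices at which the finger touches the thumb, and the entropy is computed over tapping periods grouped into bins of an arbitrarily chosen width (`bin_width`), which may differ from how the study defines it.

```python
import math
import statistics
from collections import Counter


def tapping_periods(tap_frames, fps=30):
    """Time in seconds between consecutive taps (tap_frames are frame indices)."""
    return [(b - a) / fps for a, b in zip(tap_frames, tap_frames[1:])]


def period_entropy(periods, bin_width=0.05):
    """Shannon entropy (bits) of the periods after grouping them into bins.

    The bin width is an assumption made for this sketch.
    """
    bins = Counter(round(p / bin_width) for p in periods)
    total = len(periods)
    return sum(-(c / total) * math.log2(c / total) for c in bins.values())


def variability_features(tap_frames, fps=30):
    """Higher values mean less consistent tapping."""
    periods = tapping_periods(tap_frames, fps)
    frequencies = [1.0 / p for p in periods]  # taps per second
    return {
        "period_variance": statistics.pvariance(periods),
        "frequency_std": statistics.pstdev(frequencies),
        "period_entropy": period_entropy(periods),
    }
```

Perfectly regular tapping yields zero for all three values, while irregular tapping pushes them up, which is the direction of the positive correlation with severity described above.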

Mean absolute error (MAE) was the metric used to measure the average prediction error. For the model, the MAE was 0.58, meaning that its predictions were off by a little more than half a severity level on average; for example, it may sometimes predict a level 1 severity for a patient with a level 0 severity. However, the experts themselves rated the severity correctly only 51% of the time, which leaves a very small margin between the error rates of the model and the experts.
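For readers unfamiliar with the metric, MAE is simply the average size of the gap between the true and predicted severity scores. A minimal Python sketch follows; the function name is chosen for this example.

```python
def mean_absolute_error(true_scores, predicted_scores):
    """Average absolute difference between true and predicted severity."""
    if not true_scores or len(true_scores) != len(predicted_scores):
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs(t - p) for t, p in zip(true_scores, predicted_scores))
    return total / len(true_scores)
```

For instance, if two out of four predictions are off by one level and the other two are exact, the MAE is 0.5, which is close to the 0.58 reported for the model.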

Image Source: https://doi.org/10.1038/s41746-023-00905-9

Possible challenges

Conducting assessments remotely means that disturbances in the recording can hinder the detection process. Factors that can cause disruption include background noise, blurred frames, poor lighting, and objects in the backdrop. The model outperforms experts in this respect: while experts have reported difficulties in assessing hand motion in low-quality videos, the model has managed to make fairly accurate predictions. However, a very noisy background can interfere with MediaPipe’s hand-movement tracking.

Another issue is that diagnosing Parkinson’s involves several factors beyond the finger-tapping test. In a clinical setting, several considerations are taken into account before officially diagnosing an individual with PD, such as their overall health, prescribed medications, and symptoms unrelated to motor function such as anxiety, decreased sense of smell, or digestive issues. Therefore, the model is suited to assessing patients already diagnosed with PD rather than to diagnosing the disease.

Tremors are a prevalent symptom in patients with PD and can be an obstacle for the model as well as for experts. They are unpredictable and interfere with the assessment of bradykinesia. For the model, they interfere with tracing finger movements and generate spurious signals, leading to inaccurate results. There is no solution to this problem as of now.

Ethics of using Artificial Intelligence to perform medical checkups

With the increasing integration of Artificial Intelligence into almost every aspect of our lives, ethical concerns have arisen about incorporating this technology into medical practice. The researchers have addressed these issues in their study and have emphasized the need for equitable practices when developing and implementing these models, so that the risk of algorithmic bias in terms of gender, race, or age is minimized. The model performed slightly worse on data from non-white individuals and males; the researchers intend to improve its training so that it performs equally well across all groups.
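One simple way to look for this kind of bias is to compute the prediction error separately for each demographic group and compare the results. The Python sketch below illustrates the idea only; the record layout and function name are assumptions for this example, not the evaluation procedure used in the study.

```python
from collections import defaultdict


def mae_by_group(records):
    """Mean absolute error per group.

    `records` is an assumed layout: (group_label, true_score, predicted_score).
    """
    errors = defaultdict(list)
    for group, true_score, predicted_score in records:
        errors[group].append(abs(true_score - predicted_score))
    return {group: sum(errs) / len(errs) for group, errs in errors.items()}
```

A noticeably higher error for one group than for another would point to the sort of performance gap the researchers describe.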

Conclusion

Overall, the AI-based model used in this study has proven to be nearly as effective as expert neurologists at assessing the severity of PD, has an edge over experts when it comes to remote analysis of the finger-tapping tasks performed by the patients, and provides the additional benefit of being accessible to patients regardless of their location. Once implemented for Parkinson’s disease, it has the potential to be modified and extended to several other movement-related disorders, such as ataxia and Huntington’s disease. It could be a significant stepping stone towards integrating Artificial Intelligence into healthcare in the long term.

Article Source: Reference Paper


Swasti is a scientific writing intern at CBIRT with a passion for research and development. She is pursuing a BTech in Biotechnology at Vellore Institute of Technology, Vellore. Her interests lie in exploring the rapidly growing and integrated sectors of bioinformatics, cancer informatics, and computational biology, with a special emphasis on cancer biology and immunological studies. She aims to introduce readers of her articles to the exciting developments bioinformatics has to offer in biological research today.