Protein language models (pLMs) can be used for protein structure prediction and design, but they may not fully capture the biophysics of protein structure. Researchers from Harvard University analyzed the structure prediction capabilities of the flagship pLM ESM-2. They developed an unsupervised method for assessing protein language models by comparing the coevolutionary statistics they capture with those of earlier linear models. The impetus was a persistent error: structure predictors model protein isoforms as ordered segments. This article examines that study, which delves into the inner workings of ESM-2, a powerful pLM for protein structure prediction.

Introduction

Proteins are the engines of our cells, and a protein's three-dimensional shape is central to its biological function, which is why scientists care so much about predicting structure from sequence. This used to require extensive experimental work, but things have changed dramatically in recent years with the introduction of deep learning predictors such as AlphaFold2 and protein language models (pLMs) such as ESM-2. The structures these algorithms predict are often highly accurate, but how do they work? Do they grasp the fundamentals of protein folding, or are they only recognizing patterns? Is ESM-2 searching a vast library of protein shapes for answers, or does it understand the language of protein folding?

The researchers wanted to know more, so they evaluated three hypotheses:

- The first hypothesis is that the model has learned the physics of protein folding (although this is challenged by the predictions' dependence on surrounding sequence).

- The second hypothesis proposes a “full fold lookup”, in which the model memorizes entire folds; the experiments tested and rejected this.

- The third hypothesis, which the experiments support, is that the model relies on pairwise contacts conditioned on sequence motifs and their separation.

What, then, is the secret weapon of ESM-2? According to the researchers, it is the ability to identify motifs: characteristic arrangements of interacting amino acids within protein sequences. To build up a picture of the protein's overall structure, the model evaluates these motifs and the separations between them. It is like learning a language by picking up common word patterns and sentence constructions. Rather than memorizing entire protein folds, ESM-2 interprets the motifs, and the combinations of motifs, that dictate the structure.

Evaluating the Precision of ESM-2

Several methods were used to study protein structure prediction with language models (LMs). The key ones are:

Curation of Isoform Datasets

- Researchers selected isoforms in which splicing disrupts ordered domains.

- Researchers predicted isoform and full-length protein structures using AlphaFold2, OmegaFold, and ESMFold.

- The Spatial Aggregation Propensity (SAP) score and Root Mean Squared Deviation (RMSD) were computed for the structural models.
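To make the RMSD comparison concrete, here is a minimal sketch of RMSD after optimal rigid superposition (the Kabsch algorithm) using NumPy. The article does not say how the structures were aligned, so the superposition step and the function name `kabsch_rmsd` are assumptions for illustration, not the study's pipeline.

```python
import numpy as np

def kabsch_rmsd(p, q):
    """RMSD between two (N, 3) coordinate sets after optimal superposition.

    Assumes both arrays list the same atoms in the same order.
    """
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    if p.shape != q.shape or p.ndim != 2 or p.shape[1] != 3:
        raise ValueError("expected two arrays of shape (N, 3)")
    # Remove translation by centering both sets on their centroids.
    pc = p - p.mean(axis=0)
    qc = q - q.mean(axis=0)
    # Kabsch: SVD of the 3x3 cross-covariance gives the best rotation.
    u, _, vt = np.linalg.svd(pc.T @ qc)
    # Guard against an improper rotation (reflection).
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = pc @ rot.T - qc
    return float(np.sqrt((diff ** 2).sum() / len(p)))
```

A small RMSD only says that two backbones overlap well; as the article notes later, it does not by itself show that an isoform model is physically plausible.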

Dataset for Model Comparison

- The acquired 2245 protein structures were filtered based on sequence length and similarity, as well as coevolution predictions.

- Reduced the number of missing residues in the structures.

Contact Maps from Linear Model

- Contact maps and pairwise coupling weights were obtained from multiple sequence alignments (MSAs) using the inverse covariance method.

- The researchers devised a method for calculating the contact matrix from the coupling weights.

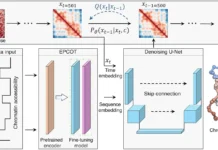
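The linear-model steps above can be sketched in a few lines of NumPy. This is a hedged illustration, not the authors' code: the ridge regularization, the Frobenius norm over amino-acid pairs, and the average product correction (APC) are common choices in coevolution analysis that are assumed here, and all function names are hypothetical.

```python
import numpy as np

def one_hot_msa(msa, alphabet):
    """Encode aligned sequences as an (N, L, A) one-hot array."""
    index = {ch: k for k, ch in enumerate(alphabet)}
    x = np.zeros((len(msa), len(msa[0]), len(alphabet)))
    for n, seq in enumerate(msa):
        for i, ch in enumerate(seq):
            x[n, i, index[ch]] = 1.0
    return x

def apc(raw):
    """Average product correction on a symmetric (L, L) score matrix."""
    raw = raw.copy()
    np.fill_diagonal(raw, 0.0)
    row = raw.sum(axis=0, keepdims=True)
    col = raw.sum(axis=1, keepdims=True)
    total = raw.sum()
    if total == 0:
        return raw
    return raw - (col * row) / total

def contacts_from_couplings(w):
    """Collapse (L, A, L, A) couplings to an (L, L) contact map."""
    raw = np.sqrt((w ** 2).sum(axis=(1, 3)))  # Frobenius norm per position pair
    return apc(raw)

def inverse_covariance_contacts(msa, alphabet="ACDEFGHIKLMNPQRSTVWY-", ridge=0.1):
    """Contact map from an MSA via a regularized inverse covariance matrix."""
    x = one_hot_msa(msa, alphabet)
    n, length, a = x.shape
    cov = np.cov(x.reshape(n, length * a), rowvar=False)
    cov += ridge * np.eye(length * a)   # assumed ridge term keeps cov invertible
    w = -np.linalg.inv(cov)             # couplings = negative precision matrix
    return contacts_from_couplings(w.reshape(length, a, length, a))

# Toy alignment: columns 0 and 3 always match, columns 1 and 2 vary freely.
toy_msa = [a + b + c + a for a in "AC" for b in "AC" for c in "AC"]
toy_map = inverse_covariance_contacts(toy_msa, alphabet="AC")
```

In the toy alignment, the only pair of columns that covaries is (0, 3), so that entry of `toy_map` should stand out from the other off-diagonal entries.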

Contact Maps from Language Model (ESM-2)

- Researchers mutated each position of the input sequence to every amino acid and recorded the resulting changes in the output logits, which together form the categorical Jacobian of the LM.

- From the Jacobian, a contact map was produced with a formula like that of the linear model method.

- The relationship between the ESM-2 Jacobian and the linear model couplings was then assessed.
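The categorical Jacobian idea can be sketched as follows. The code treats the language model as a black-box function from a token sequence to an (L, A) array of logits; `logits_fn` and the toy stand-in `toy_logits` are hypothetical, and a real analysis would wrap ESM-2 in their place. The symmetrization, norm, and APC steps are assumptions that mirror the linear-model post-processing, and any centering the authors may apply is left out.

```python
import numpy as np

def categorical_jacobian(logits_fn, tokens, n_tokens):
    """J[i, a, j, b]: change in logit b at position j when position i is set to token a."""
    tokens = np.asarray(tokens)
    length = len(tokens)
    base = logits_fn(tokens)  # (L, A) logits for the unmutated input
    jac = np.zeros((length, n_tokens, length, n_tokens))
    for i in range(length):
        for a in range(n_tokens):
            mutated = tokens.copy()
            mutated[i] = a
            jac[i, a] = logits_fn(mutated) - base
    return jac

def jacobian_to_contacts(jac):
    """Symmetrize, take the norm over token pairs, and apply APC."""
    jac = 0.5 * (jac + jac.transpose(2, 3, 0, 1))
    raw = np.sqrt((jac ** 2).sum(axis=(1, 3)))
    np.fill_diagonal(raw, 0.0)
    row = raw.sum(axis=0, keepdims=True)
    col = raw.sum(axis=1, keepdims=True)
    total = raw.sum()
    return raw if total == 0 else raw - (col * row) / total

# Hypothetical stand-in for a language model: the logits at position j
# depend only on the token at a fixed partner position.
PARTNER = [3, 1, 2, 0]

def toy_logits(tokens):
    out = np.zeros((len(tokens), 3))
    for j in range(len(tokens)):
        out[j, tokens[PARTNER[j]]] = 1.0
    return out

toy_jac = categorical_jacobian(toy_logits, [0, 1, 2, 1], n_tokens=3)
toy_contacts = jacobian_to_contacts(toy_jac)
```

Because positions 0 and 3 are the only pair whose logits respond to each other's mutations in the toy model, that pair receives the highest score. The cost is one forward pass per position and token, which for a real model would usually be batched.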

Recovery of Contacts with Flanking Regions

- Researchers retrieved secondary structural elements (SSEs) and predicted structures using ESMFold.

- Estimated contact probabilities were used to calculate contact recovery between SSE pairs.

- Modified rotary position embeddings (RoPE) made it possible to adjust amino acid positions without requiring longer inputs.
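The flanking-region experiment can be illustrated with a small sketch. Everything here is an assumption made for illustration: the mask character `X`, the 0.5 probability threshold, the half-open `(start, end)` segment convention, and the definition of recovery as the fraction of full-context contacts that are still predicted with reduced context. The article does not give the exact definitions used in the study.

```python
import numpy as np

def reveal_flanks(seq, seg_a, seg_b, flank, mask_char="X"):
    """Keep two segments plus `flank` residues on each side; mask everything else.

    Segments are (start, end) pairs with `end` exclusive.
    """
    keep = [False] * len(seq)
    for start, end in (seg_a, seg_b):
        lo = max(0, start - flank)
        hi = min(len(seq), end + flank)
        for i in range(lo, hi):
            keep[i] = True
    return "".join(ch if k else mask_char for ch, k in zip(seq, keep))

def contact_recovery(full_probs, partial_probs, seg_a, seg_b, threshold=0.5):
    """Fraction of full-context contacts between two segments that survive with less context."""
    (a0, a1), (b0, b1) = seg_a, seg_b
    full = np.asarray(full_probs)[a0:a1, b0:b1] >= threshold
    part = np.asarray(partial_probs)[a0:a1, b0:b1] >= threshold
    n_full = int(full.sum())
    if n_full == 0:
        return float("nan")  # no contacts to recover
    return float((full & part).sum() / n_full)
```

Calling `reveal_flanks` with increasing `flank` values and scoring each prediction with `contact_recovery` gives a recovery curve as a function of revealed context, which is the kind of curve the step-function observation in the findings refers to.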

Unraveling the Enigma of ESM-2

The researchers shed light on how pLMs excel at recognizing specific patterns of interactions within protein sequences and much more. By delving into the intricacies of pLMs, the researchers deciphered their underlying logic, unveiling numerous novel insights along the way. Some of the key findings are:

Prediction of Protein Isoform Structure

- State-of-the-art structure predictors, including AlphaFold2 and the LM-based OmegaFold and ESMFold, have trouble correctly predicting protein isoform structures that result from splicing events inside structured domains.

- Although the root-mean-squared deviation (RMSD) between these predicted structures and the full-length protein is frequently negligible, the presence of exposed hydrophobic residues indicates that the structures are unfolded and nonfunctional.

Unsupervised Extraction of Coevolutionary Signal from LMs

- A novel “categorical Jacobian” method was developed to extract unsupervised coevolutionary information from the LM ESM-2.

- For contact prediction, this method performs comparably to supervised methods, which suggests that LMs capture coevolutionary signals without explicit training.

Contact Prediction Mechanism in LMs

- ESM-2 relies on flanking residues to predict contacts accurately, and it favors local sequence patterns over whole protein folds.

- Contact recovery, measured as the flanking regions are progressively revealed, follows a step-function pattern, which supports this view.

- ESM-2 predicts contacts involving β-strands with less sequence context than contacts involving α-helices, as β-strands are shorter and more stable than α-helices.

Despite the benefits of pLMs, nothing is perfect, and the researchers identified several shortcomings in ESM-2. Although ESM-2 has been highly successful, its inner workings remain poorly understood. Such models need to be more transparent, particularly in critical fields such as protein engineering and drug development, because the opacity of their internal workings calls their reliability into question. Future research should improve interpretability and address these challenges to build more robust and reliable protein structure prediction systems.

Conclusion

Although protein language models (pLMs) are a powerful tool for predicting and designing protein structure and function, whether they capture the basic biophysics of proteins remains uncertain. The researchers found that pLM-based structure predictors produce non-physical structures for protein isoforms. They therefore examined the sequence context that ESM-2 needs for contact prediction. Using a “categorical Jacobian” calculation, they showed that ESM-2 stores statistics of co-evolving residues, analogous to more conventional modeling techniques such as Markov Random Fields and Multivariate Gaussian models.

For pLMs to be used effectively, these problems must be resolved. If scientists can make sense of the models' reasoning, it may lead to increasingly sophisticated models capable of comprehending protein language. Imagine AI tools that, in addition to predicting structures, also explain them, allowing scientists to collaborate with these computational partners on groundbreaking discoveries.

Story source: Reference Paper | The code for categorical Jacobian and contact prediction analyses is available on GitHub

Important Note: bioRxiv releases preprints that have not yet undergone peer review. As a result, it is important to note that these papers should not be considered conclusive evidence, nor should they be used to direct clinical practice or influence health-related behavior. It is also important to understand that the information presented in these papers is not yet considered established or confirmed.

Anchal is a consulting scientific writing intern at CBIRT with a passion for bioinformatics and its miracles. She is pursuing an MTech in Bioinformatics from Delhi Technological University, Delhi. Through engaging prose, she invites readers to explore the captivating world of bioinformatics, showcasing its groundbreaking contributions to understanding the mysteries of life. Besides science, she enjoys reading and painting.