Researchers in Finland have compared two transformer-based deep learning models, BERT and XLNet, for predicting 6-month mortality in cardiac patients from the heterogeneous electronic health records (EHRs) of over 23,000 patients. Transformers are neural networks designed for modeling sequential data, a property that suits mortality risk prediction from EHRs. To exploit the temporal information in the heterogeneous EHRs, the data were arranged into semi-structured multi-event time series. The authors find that XLNet captures more positive cases than BERT. XLNet had not previously been applied to EHR data to predict mortality risk, and the authors envision that future EHR studies using XLNet may achieve improved results.

EHRs provide insights into cardiac patients’ mortality risks: the past and the present

Electronic health records (EHRs) are a storehouse of information on patient care paths and outcomes. The wealth of real-life data they offer is an advantage for developing machine learning methods for healthcare, but the data are heterogeneous, sparse, and often incomplete, which poses challenges for deep learning-aided methods. Such data also often contain erroneous information.

Cardiovascular diseases (CVDs) are the leading cause of death worldwide and pose a major challenge to global health. They are also among the most prevalent co-morbidities associated with other life-threatening diseases such as Covid-19. Predictive models could be a game-changer by directing healthcare resources toward improving patient outcomes. It is well established that dietary and lifestyle changes improve quality of life for CVD patients. Identifying high-risk patients and patient deterioration is therefore of paramount importance in treating CVDs.

Machine learning methods have previously been used for cardiac disease detection, for example, detecting arrhythmia from electrocardiograms (ECGs) and detecting disease from cardiovascular images and EHR data. These methods use convolutional neural networks (CNNs) or recurrent neural networks (RNNs) for disease prediction. The introduction of attention mechanisms led to many methods reporting improved results. RETAIN is one such method, in which the authors combined attention with RNNs to predict heart failure from EHR data.

Transformers are state-of-the-art neural networks in machine learning. Originally built for natural language processing (NLP), they combine attention mechanisms and positional encoding to learn bidirectional temporal dependencies, which are crucial in analyzing EHR data for disease prediction.
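To make the attention idea concrete, here is a minimal pure-Python sketch of scaled dot-product self-attention. It is an illustration under simplifying assumptions, not the architecture used in the study: real transformers such as BERT and XLNet add learned query, key, and value projections, multiple heads, and positional information, all of which are omitted here. The function names are hypothetical.

```python
from __future__ import annotations

import math


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: list[list[float]]) -> list[list[float]]:
    """Scaled dot-product self-attention with queries = keys = values = x.

    Simplifying assumption: no learned projections and a single head.
    """
    d = len(x[0])
    out = []
    for q in x:
        # Score this position against every position, earlier and later alike.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        weights = softmax(scores)
        # Each output vector is a weighted average of all input vectors.
        out.append([sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)])
    return out


sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy event embeddings
attended = self_attention(sequence)
print(attended)
```

Because every position is scored against all others, each output can draw on events both before and after it in the sequence, which is the sense in which the dependencies are bidirectional.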

BERT (Bidirectional Encoder Representations from Transformers) has previously been applied to disease prediction. XLNet, another transformer model, has been shown to surpass BERT in numerous NLP tasks but had not been applied to predicting disease mortality risk until now. Given the heterogeneity of EHR data and the efficiency of transformers in processing sequential data, the authors set out to compare BERT and XLNet on the anonymous EHR data of over 23,000 cardiovascular disease patients, and they report that XLNet is the better performer in mortality risk prediction.

Transformers in action

The authors compared the transformer-based methods BERT and XLNet for predicting 6-month mortality in cardiac patients from their EHR data. The six-month period was chosen with chronic patients in mind, who may benefit from earlier predictions that open opportunities for clinical interventions, more effective care, and a reduced risk of death.

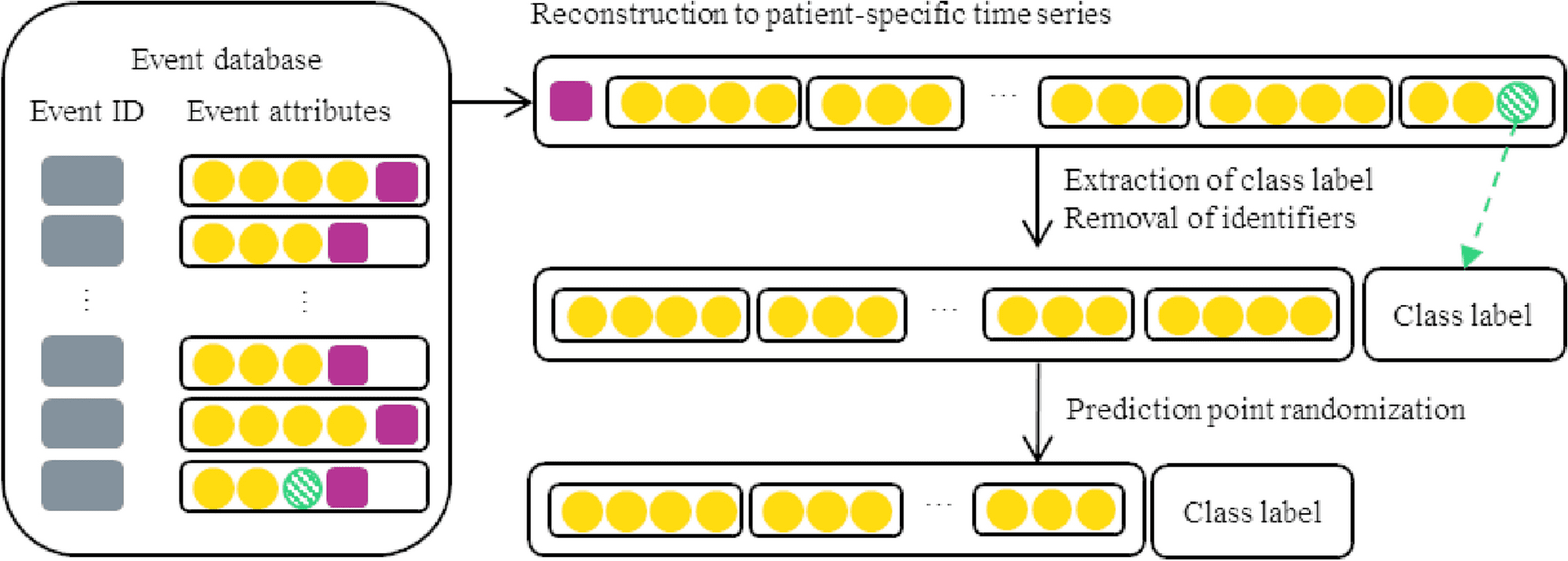

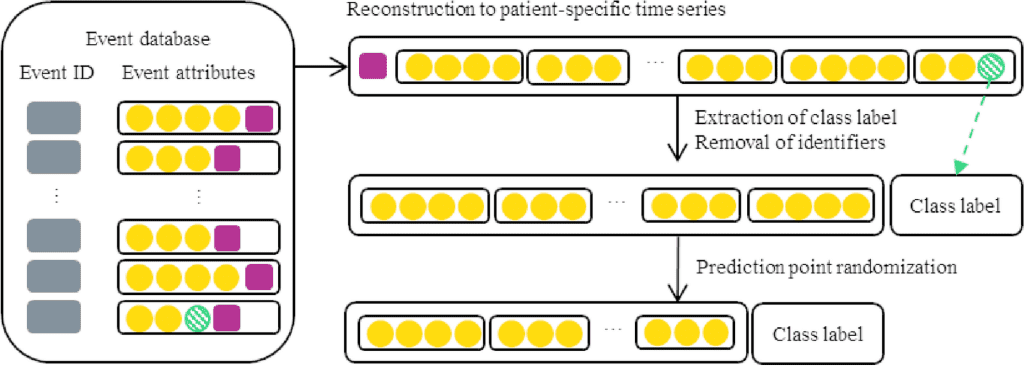

The method arranges each patient’s record events, ordered by their temporal attributes, into a time series. The resulting time series are then processed into suitable input for the transformer neural networks.

The following figure illustrates a schematic example of the formulation of time series from a patient’s record events.

Image source: https://doi.org/10.1038/s41598-023-30657-1
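The formulation of a time series from record events can be sketched in a few lines of Python. This is a minimal illustration, not the study's pipeline: the `Event` fields, the text layout of each entry, and the sample values are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Event:
    """One record event; field names are hypothetical, not the study's schema."""
    day: date
    kind: str   # e.g. "diagnosis", "lab", "medication"
    value: str  # free-form code or measurement


def to_time_series(events: list[Event]) -> str:
    """Order events chronologically and render them as one text sequence."""
    if not events:
        return ""
    ordered = sorted(events, key=lambda e: e.day)
    first_day = ordered[0].day
    parts = []
    for e in ordered:
        offset = (e.day - first_day).days  # days since the patient's first event
        parts.append(f"day {offset} {e.kind} {e.value}")
    return " ; ".join(parts)


# Made-up events for illustration only, deliberately out of order.
events = [
    Event(date(2020, 3, 10), "lab", "troponin high"),
    Event(date(2020, 3, 1), "diagnosis", "I25.1"),
    Event(date(2020, 3, 15), "medication", "statin"),
]
series = to_time_series(events)
print(series)
# → day 0 diagnosis I25.1 ; day 9 lab troponin high ; day 14 medication statin
```

Encoding time as an offset from the first event is one possible design choice; it keeps the temporal ordering and spacing while dropping absolute dates.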

The input sequences were tokenized by using pre-trained tokenizers for the transformers, followed by model hyperparameter optimization and model evaluation.
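The study relied on the pre-trained tokenizers that accompany the transformer models; the sketch below does not call them. Instead it uses a toy whitespace vocabulary to show the general shape of what a tokenizer hands to a model: a fixed-length list of token IDs plus an attention mask marking real tokens versus padding. All names, the special-token IDs, and the sample text are assumptions for illustration.

```python
from __future__ import annotations

PAD, UNK = 0, 1  # hypothetical special-token IDs for this toy vocabulary


def build_vocab(texts: list[str]) -> dict[str, int]:
    """Assign an integer ID to every whitespace-separated token seen."""
    vocab = {"[PAD]": PAD, "[UNK]": UNK}
    for text in texts:
        for tok in text.split():
            vocab.setdefault(tok, len(vocab))
    return vocab


def encode(text: str, vocab: dict[str, int], max_len: int) -> tuple[list[int], list[int]]:
    """Map tokens to IDs, truncate to max_len, then right-pad with PAD."""
    ids = [vocab.get(tok, UNK) for tok in text.split()][:max_len]
    mask = [1] * len(ids)  # 1 marks a real token
    pad = max_len - len(ids)
    return ids + [PAD] * pad, mask + [0] * pad  # 0 marks padding


vocab = build_vocab(["day 0 diagnosis I25.1", "day 9 lab troponin high"])
ids, mask = encode("day 9 lab creatinine", vocab, max_len=6)
print(ids)   # → [2, 6, 7, 1, 0, 0]  ("creatinine" is unseen, so it maps to UNK)
print(mask)  # → [1, 1, 1, 1, 0, 0]
```

Pre-trained tokenizers typically split text into subword units rather than whole words, so unseen terms are broken into known pieces instead of collapsing to a single unknown token as they do here.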

Comparison between XLNet and BERT

Both BERT and XLNet were assessed for sensitivity to the selection of training instances. XLNet was found to be more sensitive than BERT in detecting positive cases. To examine the effect of random initialization, the final model training was repeated five times. BERT was found to have slightly better precision. However, XLNet’s improvement in recall over BERT exceeds its drop in precision, so XLNet is better suited for detecting positive cases. Although transformers like XLNet can in principle handle sequences of any length, practical implementations are limited by the hardware memory available for training and for interpreting the visual outputs.
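The precision and recall trade-off described above can be illustrated with a short calculation. The counts below are made up for two hypothetical models and are not results from the study; they only show how precision and recall are computed from true positives, false positives, and false negatives, and then averaged over five repeated runs.

```python
from __future__ import annotations

from statistics import mean


def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def average_over_runs(runs: list[tuple[int, int, int]]) -> tuple[float, float]:
    """Mean precision and mean recall across repeated training runs."""
    scores = [precision_recall(tp, fp, fn) for tp, fp, fn in runs]
    return mean(p for p, _ in scores), mean(r for _, r in scores)


# Made-up (tp, fp, fn) counts for five runs each; NOT figures from the paper.
runs_model_a = [(30, 10, 70), (32, 8, 68), (28, 12, 72), (30, 10, 70), (30, 10, 70)]
runs_model_b = [(42, 18, 58), (40, 20, 60), (44, 16, 56), (42, 18, 58), (42, 18, 58)]

p_a, r_a = average_over_runs(runs_model_a)
p_b, r_b = average_over_runs(runs_model_b)
print(f"model A: precision={p_a:.2f} recall={r_a:.2f}")
print(f"model B: precision={p_b:.2f} recall={r_b:.2f}")
print(f"recall gain={r_b - r_a:.2f}, precision drop={p_a - p_b:.2f}")
```

In this toy setup, model B gives up a little precision but finds noticeably more of the positive cases, which is the kind of trade-off that favors a model when missing a high-risk patient is costlier than a false alarm.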

Conclusion

Transformers have been shown to be a suitable deep learning technique for predicting patient mortality risk from EHR data. Although EHR data are sparse, BERT and XLNet, with their ability to learn bidirectional temporal dependencies, are able to predict CVD patient mortality risk. XLNet gave better results than BERT and is hence a better choice for future studies. Hardware resources limit model training for transformer-based mortality prediction, so improved future methods will require greater computational power. The entire study was based on an anonymous database of over 23,000 cardiovascular disease patients, which may encourage future model development on anonymous datasets. The ability to predict the mortality risk of cardiovascular disease patients over a six-month period is a notable achievement for the healthcare sector, supporting both clinical intervention to prevent death and better lifestyle management for a better quality of life.

Article Source: Reference Paper

Banhita is a consulting scientific writing intern at CBIRT. She is a mathematician turned bioinformatician who has gained valuable experience in bioinformatics while working at esteemed institutions such as KTH, Sweden, and NCBS, Bangalore. Banhita holds a Master's degree in Mathematics from the prestigious IIT Madras, as well as the University of Western Ontario in Canada. She is deeply passionate about scientific writing, making her an invaluable asset to any research team.