Cancer is a significant threat worldwide. In a study published in ACS Cancer, Bray et al. stated that cancer may overtake cardiovascular disease as the leading cause of premature death worldwide over the course of this century. Cancer biomarkers are biological molecules produced by a patient’s tumor; they help characterize the disease’s state and prognosis. Prognostic markers give insight into survival and disease progression. Despite biomarker testing and targeting, treatment responses still vary across patients. Thus, more accurate biomarkers that carry information on the clinical context are required to develop the next generation of precision medicine. Artificial intelligence (AI) is a promising way to find them.

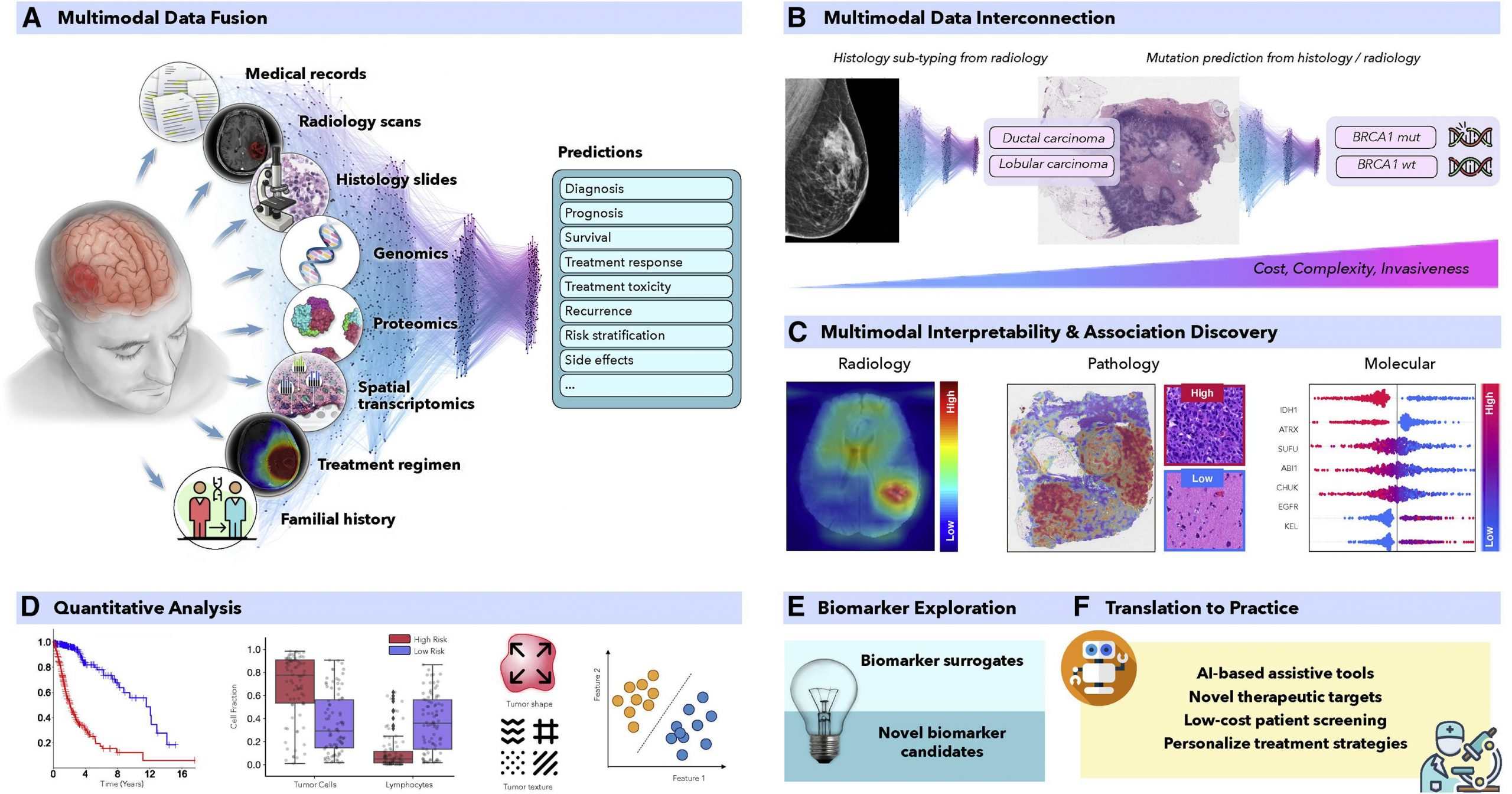

In recent years, AI has proven quite effective in a variety of therapeutically relevant tasks, even ones that are not straightforward for human observers. To produce more precise patient predictions, AI models can combine supplementary data and clinical context from many data sources. For the discovery of new biomarkers, the clinical insights revealed by successful models can be further clarified using interpretability methods and quantitative analysis. Similarly, AI models can identify relationships between certain genetic mutations and distinct alterations in cellular morphology, or between radiological observations and histologically distinct tumor subtypes or molecular characteristics.

The AI models used in oncology fall into three categories:

1) Supervised methods

2) Semi-supervised methods

3) Unsupervised methods

Supervised Methods

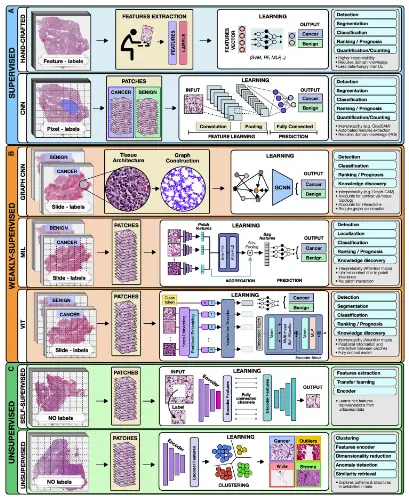

In supervised models, the input data is mapped to a predefined set of labels using annotated data, such as radiology images paired with patient outcomes. Hand-crafted and representation learning methods are the primary examples of fully supervised methods.

Hand-Crafted Methods: Predefined features such as cell size and shape serve as the input. The model is then trained using machine learning methods such as the random forest (RF), support vector machine (SVM), or multilayer perceptron (MLP). Because they are simple, often sufficient for the task, and able to learn from smaller datasets with simpler features, hand-crafted methods remain among the most widely used.
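As a rough illustration of what "predefined features such as cell size and shape" can look like in practice, the sketch below computes three simple shape descriptors from a binary mask of a single segmented cell. The function name `shape_features`, the mask, and the choice of descriptors are illustrative assumptions, not taken from the reference paper.

```python
import math

def shape_features(mask):
    """Compute simple hand-crafted shape features from a binary mask (rows of 0/1)."""
    rows, cols = len(mask), len(mask[0])
    area = sum(sum(row) for row in mask)
    perimeter = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            # Count every side of a cell pixel that faces background or the image border.
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                if nr < 0 or nr >= rows or nc < 0 or nc >= cols or not mask[nr][nc]:
                    perimeter += 1
    # Circularity is 4*pi*area / perimeter^2; larger values mean a more compact shape.
    circularity = 4 * math.pi * area / perimeter ** 2 if perimeter else 0.0
    return {"area": area, "perimeter": perimeter, "circularity": circularity}

# Hypothetical 5x5 mask of one segmented cell (1 = cell pixel).
cell_mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
features = shape_features(cell_mask)
print(features)
```

A feature vector built this way for each cell or region would then be passed to a classifier such as an RF, SVM, or MLP.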

Representation learning methods: Deep learning (DL) is a form of representation learning in which rich feature representations are learned directly from raw data. The convolutional neural network (CNN) is the most common DL model for image analysis. For example, a CNN can divide a tumor image into patches and label each one as ‘cancer’ or ‘no cancer’ after training on manually annotated tumor regions. However, CNNs fall short in predicting patient survival and treatment outcomes and are often criticized for their lack of interpretability. Despite these flaws, the CNN’s strong performance has led to its wide application.
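The core operation inside a CNN is a small filter sliding over the image. The sketch below shows one such filter followed by a ReLU, in plain Python. The kernel here is set by hand purely for illustration; in a real CNN the kernel values are learned from data, and many filters and layers are stacked. The function name `conv2d_valid` and the toy image are assumptions.

```python
def conv2d_valid(image, kernel):
    """Slide a kernel over a 2D image (no padding, stride 1) and apply ReLU.

    As in most deep learning libraries, the kernel is not flipped,
    so this is strictly a cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            row.append(max(0.0, total))  # ReLU keeps only positive responses
        output.append(row)
    return output

# Toy 4x4 image with a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1] for _ in range(4)]
# Hand-set kernel that responds to dark-to-bright transitions from left to right.
edge_kernel = [[-1, 1], [-1, 1]]

feature_map = conv2d_valid(image, edge_kernel)
print(feature_map)
```

The feature map is non-zero only where the filter overlaps the edge, which is the kind of local pattern a trained CNN picks up on its own.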

Semi-supervised Methods

These methods fall under the broader category of supervised learning. For example, a weakly supervised model trains on patient-level data such as survival and diagnosis, limiting the need for manual annotations. Graph convolutional networks (GCNs), multiple-instance learning (MIL), and vision transformers (ViTs) are examples of weakly supervised methods.

Image Source – https://doi.org/10.1016/j.ccell.2022.09.012

Graph convolutional networks (GCN): A GCN is an approach for semi-supervised learning on graph-structured data. Traditional DL models for digital pathology split the image into discrete, mutually exclusive patches; GCNs, by comparison, can capture a wider context and the spatial structure of the tissue. They are helpful for tasks where the spatial context extends beyond the boundaries of a single patch.
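To make the idea of context flowing between patches concrete, here is a minimal sketch of a single graph convolution layer using the common symmetric normalization, ReLU(D^-1/2 (A + I) D^-1/2 H W). The three-node graph, the feature values, and the weight matrix are made up for illustration; in a real model the weights are learned and the graph is built from the tissue image.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def gcn_layer(adj, feats, weight):
    """One graph convolution: normalize adjacency, aggregate neighbors, project, ReLU."""
    n = len(adj)
    # Add self-loops so every node keeps its own features.
    a_hat = [[adj[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    # Symmetric normalization: D^-1/2 (A + I) D^-1/2.
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    out = matmul(matmul(norm, feats), weight)
    return [[max(0.0, v) for v in row] for row in out]

# Hypothetical graph of three tissue patches in a chain: 0 - 1 - 2.
adjacency = [
    [0, 1, 0],
    [1, 0, 1],
    [0, 1, 0],
]
node_features = [[1.0], [0.0], [0.0]]  # only patch 0 carries a signal
weights = [[1.0]]                      # identity projection, for readability

updated = gcn_layer(adjacency, node_features, weights)
print(updated)
```

After one layer, patch 1 has picked up part of the signal from its neighbor patch 0, while patch 2, two hops away, has not. Stacking layers widens the neighborhood each patch can see.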

Multiple-Instance Learning (MIL): MIL consists of three modules: i) feature learning or extraction, ii) aggregation, and iii) prediction. Each image is first mapped to feature embeddings. Rather than a set of individually labeled instances, the learner receives a set of labeled bags, each containing many instances. The instance-level embeddings are then aggregated, commonly with attention-based pooling, in which a small network learns the importance of each instance. MIL is well suited to oncology and genomic datasets.
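The aggregation step can be sketched as follows. This is one common form of attention-based pooling, where each instance embedding h gets a score w · tanh(V h), the scores are turned into weights with a softmax, and the bag embedding is the weighted sum. The parameters `V` and `w` are fixed by hand here as an assumption; in a trained MIL model they are learned, and the embeddings would come from the feature extraction module.

```python
import math

def attention_pool(instances, V, w):
    """Aggregate instance embeddings into one bag embedding with attention weights."""
    scores = []
    for h in instances:
        hidden = [math.tanh(sum(v * x for v, x in zip(v_row, h))) for v_row in V]
        scores.append(sum(w_i * z for w_i, z in zip(w, hidden)))
    # Softmax over instances (shifted by the max score for numerical stability).
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    attention = [e / total for e in exps]
    # Bag embedding is the attention-weighted sum of instance embeddings.
    dim = len(instances[0])
    bag = [sum(a * h[d] for a, h in zip(attention, instances)) for d in range(dim)]
    return bag, attention

# Hypothetical bag: three patch embeddings from one slide, each of dimension 2.
patch_embeddings = [[0.1, 0.0], [0.0, 0.2], [2.0, 1.5]]
V = [[1.0, 0.0], [0.0, 1.0]]  # hand-set attention parameters (normally learned)
w = [1.0, 1.0]

bag_embedding, attention = attention_pool(patch_embeddings, V, w)
print(attention)
print(bag_embedding)
```

The attention weights sum to one, and the third patch dominates the bag embedding. In a pathology setting, these weights are also what makes it possible to inspect which patches drove a slide-level prediction.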

Vision Transformers: The Vision Transformer, or ViT, is a model for image classification in which an image is split into patches, each patch is embedded, position embeddings are added, and the resulting sequence is fed to a standard Transformer encoder. Position information and multi-head self-attention let the model incorporate spatial information, which increases robustness. On the downside, ViTs require large quantities of data, a limitation the scientific community is trying to overcome.
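The input side of a ViT, up to the point where tokens enter the Transformer encoder, can be sketched in a few lines. The helper names, the 4x4 toy image, and the projection and position values below are illustrative assumptions; real models learn both the projection and the position embeddings, usually prepend a class token, and work on much larger images. The sketch also assumes the image size is divisible by the patch size.

```python
def image_to_patches(image, patch):
    """Split a 2D image into non-overlapping patch x patch blocks, each flattened."""
    patches = []
    for r in range(0, len(image), patch):
        for c in range(0, len(image[0]), patch):
            patches.append([image[r + i][c + j] for i in range(patch) for j in range(patch)])
    return patches

def embed_patches(patches, projection, positions):
    """Linearly project each flattened patch and add its position embedding."""
    tokens = []
    for k, flat in enumerate(patches):
        projected = [sum(x * w for x, w in zip(flat, w_row)) for w_row in projection]
        tokens.append([value + positions[k][d] for d, value in enumerate(projected)])
    return tokens

# Toy 4x4 image with pixel values 0..15.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
patches = image_to_patches(image, patch=2)

# Hand-set projection from 4 pixels to a 2-dimensional embedding (normally learned).
projection = [[0.25, 0.25, 0.25, 0.25], [1, 0, 0, -1]]
# One position embedding per patch (normally learned).
positions = [[0.0, 0.0], [0.1, 0.1], [0.2, 0.2], [0.3, 0.3]]

tokens = embed_patches(patches, projection, positions)
print(tokens)
```

The resulting list of tokens, one per patch, is what a standard Transformer encoder would consume.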

Unsupervised Methods

These models try to uncover information in the data without using any labels. Unsupervised methods include self-supervised and fully unsupervised strategies.

Self-Supervised Methods: Rich features are learned from the data itself in order to obtain high-quality embeddings. For example, a model can be trained on unlabeled pathology images of tumors, learning distinctive image features that label-driven training might miss.

Fully Unsupervised Methods: Unsupervised techniques make it possible to explore structure, similarity, and common features among data points. One may, for instance, use a pre-trained encoder to extract embeddings from a large dataset of varied patients and then cluster those embeddings to uncover characteristics shared across patient cohorts.
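The clustering step could look like the sketch below, which uses plain k-means as one possible clustering method (the article does not name a specific algorithm). The embeddings are made-up two-dimensional values standing in for the output of a pre-trained encoder, and the initialization simply takes the first k points so the example stays deterministic.

```python
def kmeans(points, k, iterations=10):
    """Cluster points with k-means; returns one cluster label per point and the centroids."""
    centroids = [list(p) for p in points[:k]]  # simple deterministic initialization
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        for i, p in enumerate(points):
            distances = [sum((a - b) ** 2 for a, b in zip(p, c)) for c in centroids]
            labels[i] = distances.index(min(distances))
        # Update step: each centroid moves to the mean of its members.
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids

# Hypothetical patient embeddings (in practice, produced by a pre-trained encoder).
patient_embeddings = [
    [0.1, 0.2],
    [5.0, 5.1],
    [0.0, 0.3],
    [5.2, 4.9],
    [0.2, 0.1],
]
labels, centroids = kmeans(patient_embeddings, k=2)
print(labels)
```

Patients that end up in the same cluster can then be examined for shared clinical or molecular characteristics.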

Multimodal data fusion aims to extract and combine complementary contextual information from several modalities to improve decision-making. For example, looking only at mutations or only at histological samples does not by itself provide full information on clinical outcomes. Fusing multimodal data with AI strategies can increase robustness and overall accuracy. Histopathology, radiology such as MRI scans, and molecular data can be linked and interpreted together with AI to predict patient survival and treatment outcomes.
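One of the simplest fusion strategies is to concatenate per-modality embeddings into a single vector that a downstream model can use. The sketch below assumes each modality has already been encoded into a small vector; the modality names, values, and the L2 normalization step are illustrative choices rather than a method described in the reference paper.

```python
def l2_normalize(vec):
    """Scale a vector to unit length so no modality dominates by magnitude alone."""
    norm = sum(v * v for v in vec) ** 0.5
    return [v / norm for v in vec] if norm else list(vec)

def fuse(modalities):
    """Concatenate normalized embeddings in a fixed (alphabetical) modality order."""
    fused = []
    for name in sorted(modalities):
        fused.extend(l2_normalize(modalities[name]))
    return fused

# Hypothetical embeddings for one patient, one vector per modality.
patient = {
    "histology": [3.0, 4.0],
    "radiology": [0.0, 2.0],
    "molecular": [1.0, 0.0, 0.0],
}
fused = fuse(patient)
print(fused)
```

The fused vector would then feed a survival or treatment-response model. More elaborate schemes, such as attention over modalities, follow the same pattern of encoding each modality first and combining afterwards.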

Final Thoughts

From prevention to intervention, artificial intelligence has the power to transform oncology. To support widespread patient screening and preventive care, AI models can examine complex and diverse data to find characteristics connected to cancer risk. These AI methods can also aid in discovering diagnostic or prognostic biomarkers from readily available data. Similarly, the models can find non-invasive replacements for current biomarkers to reduce the need for invasive surgical procedures. Before interventions, predictive models can forecast risk factors or unfavorable treatment outcomes to help with patient management. Looking further ahead, AI models might also evaluate data collected from personal wearable technology to look for early indications of treatment resistance.

Rigorous validation of AI models through clinical investigations and trials is what will advance their use in medicine. Combining AI, laboratory models, and human expertise can spur further development. Although AI models have limitations, these should motivate rather than cause fear. In light of rising cancer incidence rates, it is our responsibility to take advantage of AI approaches to hasten the discovery of advances and their translation into clinical practice, for the benefit of patients and healthcare professionals.

Article Source: Reference Paper

Shwetha is a consulting scientific content writing intern at CBIRT. She has completed her Master’s in biotechnology at the Indian Institute of Technology, Hyderabad, with nearly two years of research experience in cellular biology and cell signaling. She is passionate about science communication, cancer biology, and everything that strikes her curiosity!