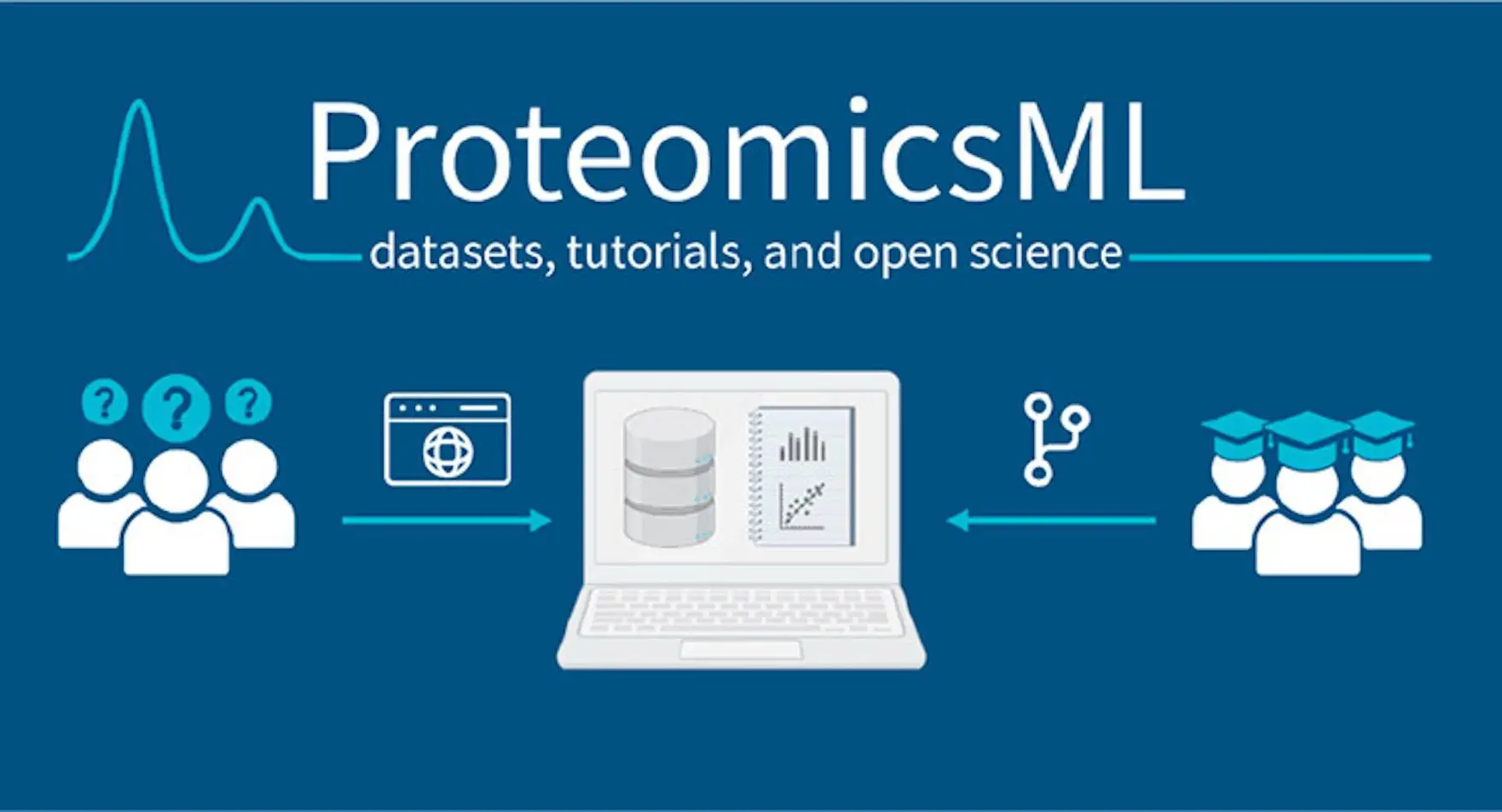

Acquiring and curating data sets for machine learning is laborious, and proteomics poses particular difficulties because a significant amount of data reduction separates raw data from machine learning-ready data. Laboratories frequently employ distinctive and intricate data processing pipelines to maximize performance in predictive proteomics, at the cost of accessibility and reproducibility. To address this, researchers from multiple institutes present ProteomicsML, an online resource that offers proteomics-based data sets and tutorials covering most of the physicochemical peptide properties currently being studied. ProteomicsML is a community-driven platform that provides simple access to sophisticated algorithms and data sets in the field. It is a useful tool for educators and beginners alike, with tutorials on how to work with these algorithms.

Introduction

Computational prediction of analyte behavior in mass spectrometry (MS) data has been studied for almost fifty years. Machine learning (ML) approaches were first applied in 1998; as computing power and technology advanced, ML-based models soon surpassed human accuracy. Since then, models have been trained for several physicochemical properties relevant to high-throughput proteomics. Fragmentation spectrum intensities and retention times are the most frequently studied, but several less well-known properties have been modeled as well. With ML methods increasingly integrated into both stand-alone predictive tools and mainstream software, proteomics is starting to show what this computational power can deliver.

In other ML fields, well-known data sets serve as shared reference points. The Titanic data set, for example, has been modeled more than 54,000 times, and instructional data sets such as MNIST and IRIS are routinely used to teach common ML techniques. State-of-the-art models can compare their predictive performance on benchmark data sets such as ImageNet or those in the UCI Machine Learning Repository. Comparable data sets would be similarly useful in proteomics, both as a starting point for ML modeling and as benchmarks for comparing models.

Despite several attempts to investigate their potential, predictive models are not yet widely used in proteomics. The main reasons are as follows:

- Acquiring data sets appropriate for machine learning is a significant challenge in proteomics. Preparing raw proteomics data requires a thorough understanding of file formats and postprocessing techniques. Comparing MS data can be difficult because metadata is often missing. Most ML frameworks for proteomics rely on their own specific postprocessing pipelines. Tools designed to make this process easier, such as ppx and MS2AI, are constrained by the complexity of data from liquid chromatography coupled to mass spectrometry (LC-MS).

- ProteomeTools is a popular source of data sets suitable for ML training, and these data sets are frequently used for that purpose even though the field has no formal consensus on them. However, it lacks post-publication support and long-term maintenance. There is also no commonly used data set for evaluating the performance of tools created by different investigators. The problem is made worse by individual groups’ reliance on different pre- and postprocessing techniques, such as variations in measurement normalization or in how model performance metrics are implemented.
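To illustrate why differences in normalization make reported metrics hard to compare, the minimal sketch below scores the same set of retention time predictions twice: once in minutes and once after min-max scaling. All numbers and function names are invented for this illustration and do not come from any published model or from ProteomicsML.

```python
def mean_absolute_error(observed, predicted):
    """Average absolute difference between paired values."""
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)


def min_max_scale(values, low, high):
    """Map values linearly so that low -> 0.0 and high -> 1.0."""
    return [(v - low) / (high - low) for v in values]


# Hypothetical retention times in minutes (illustrative numbers only).
observed = [10.0, 25.0, 40.0, 55.0, 70.0]
predicted = [12.0, 24.0, 43.0, 52.0, 71.0]

# Error reported in the original unit (minutes).
mae_minutes = mean_absolute_error(observed, predicted)

# Error reported after scaling both series with the observed range.
low, high = min(observed), max(observed)
mae_scaled = mean_absolute_error(
    min_max_scale(observed, low, high),
    min_max_scale(predicted, low, high),
)

print(f"MAE in minutes: {mae_minutes:.3f}")
print(f"MAE on scaled values: {mae_scaled:.4f}")
```

Both figures describe identical predictions, so a reported error is only comparable across groups when the unit and the normalization are stated alongside it.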

ProteomicsML is a web platform created for the Lorentz Centre Workshop on Proteomics with the goal of making ML techniques easier to use in MS-based proteomics. The platform gathers and distributes ready-to-use data sets for ML research and introduces new users to the field through expert-led tutorials. It is built on widely accessible tools and designed for easy future maintenance. It also gives a brief overview of the available data sets and explains how more data can be added. The first series of tutorials serves as a primer on machine learning in proteomics.

Understanding ProteomicsML

ProteomicsML is a resource for proteomics ML researchers that offers starter data sets and instructional materials. Because the site’s code base is managed in a GitHub repository, it is simple to maintain and open to community contributions. Researchers open pull requests on the repository to propose additional tutorials or data sets, and maintainers review them against the website’s criteria. The tutorials and data sets in the GitHub repository are licensed under the Creative Commons Attribution 4.0 license. Larger data sets are kept on a dedicated ProteomicsML FTP site that uses PRIDE database infrastructure.

ProteomicsML aims to grow the field by offering comprehensive documentation, tutorials for specific ML techniques, and a contributing guide for sharing data sets. Once maintainers have curated a contribution, it goes through a build test and is then automatically published and made available to other researchers.

ProteomicsML provides tutorials and data sets for predicting LC-MS properties such as fragmentation intensity and retention time. The platform arranges data sets by data type and offers each in a simple format that can be fed directly into ML toolkits. Each data type may contain one or more data sets serving different purposes, and each data set should carry appropriate metadata annotations.
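As a rough idea of what "direct entry into machine learning toolkits" can look like, the sketch below reads a small table of peptides and retention times and turns each sequence into amino acid counts. The column names `sequence` and `retention_time`, the helper names, and the four rows are assumptions made for this example; the actual ProteomicsML file layouts may differ.

```python
import csv
import io

# Stand-in for a downloaded file; the column names "sequence" and
# "retention_time" are assumptions made for this sketch.
RAW = """sequence,retention_time
PEPTIDEK,21.4
ACDEFGHIK,35.2
LLSYVDDEAFIR,58.9
GGK,4.1
"""

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def composition_features(sequence):
    """Count how often each of the 20 standard amino acids occurs."""
    return [sequence.count(aa) for aa in AMINO_ACIDS]


def load_dataset(handle):
    """Return (features, targets) lists from a CSV file-like object."""
    features, targets = [], []
    for row in csv.DictReader(handle):
        features.append(composition_features(row["sequence"]))
        targets.append(float(row["retention_time"]))
    return features, targets


X, y = load_dataset(io.StringIO(RAW))
print(len(X), "peptides,", len(X[0]), "features each")
print("first target:", y[0])
```

The resulting `X` and `y` lists have the feature-matrix and target-vector shape that most ML toolkits accept; in practice a data frame library would usually replace the `csv` module.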

ProteomicsML offers comprehensive tutorials on downloading, importing, and handling data sets and on training different ML models. For several LC-MS data types, compatibility with ML frameworks depends heavily on complex preprocessing steps. Tutorials can be specific to an attribute or to a data set, so new submissions can focus on particular ML models or techniques or on a particular aspect of data preprocessing.

New modeling methodologies are frequently published together with data sets that use unique pre- and postprocessing techniques. ProteomicsML improves reproducibility and makes community benchmarking easier by allowing contributors to upload the new data, a standardized metadata entry, and a tutorial to the website.

Datasets used in ProteomicsML

ProteomicsML offers data sets in ML-ready format derived from the original raw data, mostly from the PRIDE database. To guarantee complete provenance, the platform provides in-depth tutorials on transforming raw data into ML-ready formats. The data sets currently cover ion mobility, fragmentation intensity, retention time, and protein detectability, with more data types expected as the platform evolves with the field. Researchers can upload data to this standardized platform, which also offers comprehensive tutorials on data handling and ML techniques, promoting more reproducible science. The data sets submitted so far are described below:

- Ion mobility: Ion mobility is a technique that separates ionized analytes based on their shape, size, and physicochemical properties. Methods include using an electric field to drive or trap ions in an ion mobility cell, where peptides collide with an inert gas without fragmenting. Larger peptides are affected more by these collisions, which results in a higher measured collisional cross section (CCS). Earlier ion mobility prediction techniques were built on molecular dynamics models, but more recent work has produced several ML and deep learning (DL) methods for peptide and metabolite CCS prediction. ProteomicsML tutorials train a variety of model types, from basic linear models to more intricate nonlinear models, using trapped ion mobility (TIMS) data from Meier et al. and traveling wave ion mobility (TWIMS) data from Van Puyvelde et al.

- Retention time: Retention time is a critical attribute in predictive proteomics, and new multitiered ML-ready data sets have been assembled from the ProteomeTools synthetic peptide library. These data sets come in three sizes: large (1 million data points), medium (250,000 data points), and small (100,000 data points). The only modification they contain is carbamidomethylation of cysteine. An additional tier provides a data set of 200,000 oxidized peptides and a mixed data set of 200,000 oxidized and 200,000 unmodified peptides, intended for training models for practical applications. Two tutorials explain how to use these data sets in deep learning models with little preprocessing. A further, more detailed tutorial aligns and combines retention times from different MaxQuant evidence files into a single file suitable for ML-based retention time prediction.

- Fragmentation intensity: Fragment ion intensity prediction is a complex problem in peptide identification, for which ProteomicsML offers extensive data sets and tutorials. The preprocessing procedures for fragmentation data are intricate and can differ greatly between laboratories, so a simple format with uniform columns is difficult to define. Two tutorials have been developed: one reproduces the MS2PIP data processing on a consensus human spectral library from the National Institute of Standards and Technology, and the other reproduces the Prosit data processing approach using ProteomeTools data sets. These are the only processing approaches covered on ProteomicsML at the moment; others might be added in the future.

- Protein detectability: Modern proteomics techniques identify and quantify most proteins, but some still go undetected. ML has been applied to investigate the properties that influence detectability. A model can be trained on a proteome’s attributes using actual observations of which proteins are detected and which are not. This approach highlights proteins with properties that should place them in the undetected group but that are nevertheless detected, as well as proteins with properties that should place them in the detected group but that are not. The data set provided comes from the Arabidopsis PeptideAtlas, a thorough examination of the Arabidopsis thaliana proteome based on a 2021 study.
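The retention time item above mentions aligning retention times from different MaxQuant evidence files. One simple way to think about such an alignment is a linear calibration on peptides shared between two runs, sketched below. This is an assumption chosen for clarity and not necessarily the method used in the ProteomicsML tutorial; the run contents and the helper names `fit_line` and `align_run` are hypothetical, and real chromatographic shifts are often nonlinear.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def align_run(reference, run):
    """Map the retention times of `run` onto the scale of `reference`.

    Both arguments are dicts of peptide sequence -> retention time (minutes).
    Peptides seen in both runs act as calibration anchors.
    """
    shared = sorted(set(reference) & set(run))
    if len(shared) < 2:
        raise ValueError("need at least two shared peptides to calibrate")
    slope, intercept = fit_line(
        [run[p] for p in shared], [reference[p] for p in shared]
    )
    return {p: slope * rt + intercept for p, rt in run.items()}


# Hypothetical runs: run_b is run_a stretched by 1.1 and shifted by 2 minutes.
run_a = {"PEPTIDEK": 20.0, "ACDEFGHIK": 30.0, "LLSYVDDEAFIR": 50.0}
run_b = {"PEPTIDEK": 24.0, "ACDEFGHIK": 35.0, "LLSYVDDEAFIR": 57.0, "GGK": 6.4}

aligned_b = align_run(run_a, run_b)

# Merge: keep reference values, add peptides only seen in the aligned run.
merged = {**aligned_b, **run_a}
for peptide, rt in sorted(merged.items()):
    print(f"{peptide}\t{rt:.2f}")
```

After calibration, the peptide seen only in the second run is placed on the reference time scale, so both runs can be combined into one table for model training.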

ProteomicsML also draws on ProteomeXchange data sets used in proteomics ML publications. These data sets are labeled with usage tags such as retention time, deep learning, and benchmarking. If ProteomicsML does not already have an appropriate data set, users can search the corresponding PRIDE data sets by these tags and quickly assemble one.

Conclusion

ProteomicsML provides tutorials and data sets on different LC-MS peptide properties, making it an extensive resource for MS-based proteomics practitioners. This website gives novices who lack in-depth knowledge of the complete proteome analysis pipeline a place to start and enables researchers to compare novel algorithms to the most advanced models available. The site seeks to support more open and reproducible science in the area and assist the upcoming generation of machine learning practitioners.

Article Source: Reference Paper | The platform is freely available at Website | Contributions to the project could be made through GitHub

Deotima is a consulting scientific content writing intern at CBIRT. Currently she's pursuing Master's in Bioinformatics at Maulana Abul Kalam Azad University of Technology. As an emerging scientific writer, she is eager to apply her expertise in making intricate scientific concepts comprehensible to individuals from diverse backgrounds. Deotima harbors a particular passion for Structural Bioinformatics and Molecular Dynamics.
