Indian Institute of Science (IISc) researchers have created a novel GPU-based machine learning model to better understand and predict human brain connections across various brain regions.

Image Source: https://iisc.ac.in/events/using-gpus-to-discover-human-brain-connectivity/

The researchers present “Regularized, Accelerated, Linear Fascicle Evaluation” (ReAl-LiFE), a GPU-based implementation of a state-of-the-art streamline pruning algorithm (LiFE) that achieves speedups of more than 100× over earlier CPU-based versions.

ReAl-LiFE can rapidly analyze the massive volumes of data produced by diffusion magnetic resonance imaging (dMRI) scans of the human brain. With it, the scientists analyzed dMRI data more than 150 times faster than current state-of-the-art methods.

Devarajan Sridharan, Associate Professor at the Centre for Neuroscience (CNS), IISc, and corresponding author of the study, published in the journal Nature Computational Science, says that with this GPU-based machine learning model, tasks that used to take hours to days can now be completed in seconds to minutes.

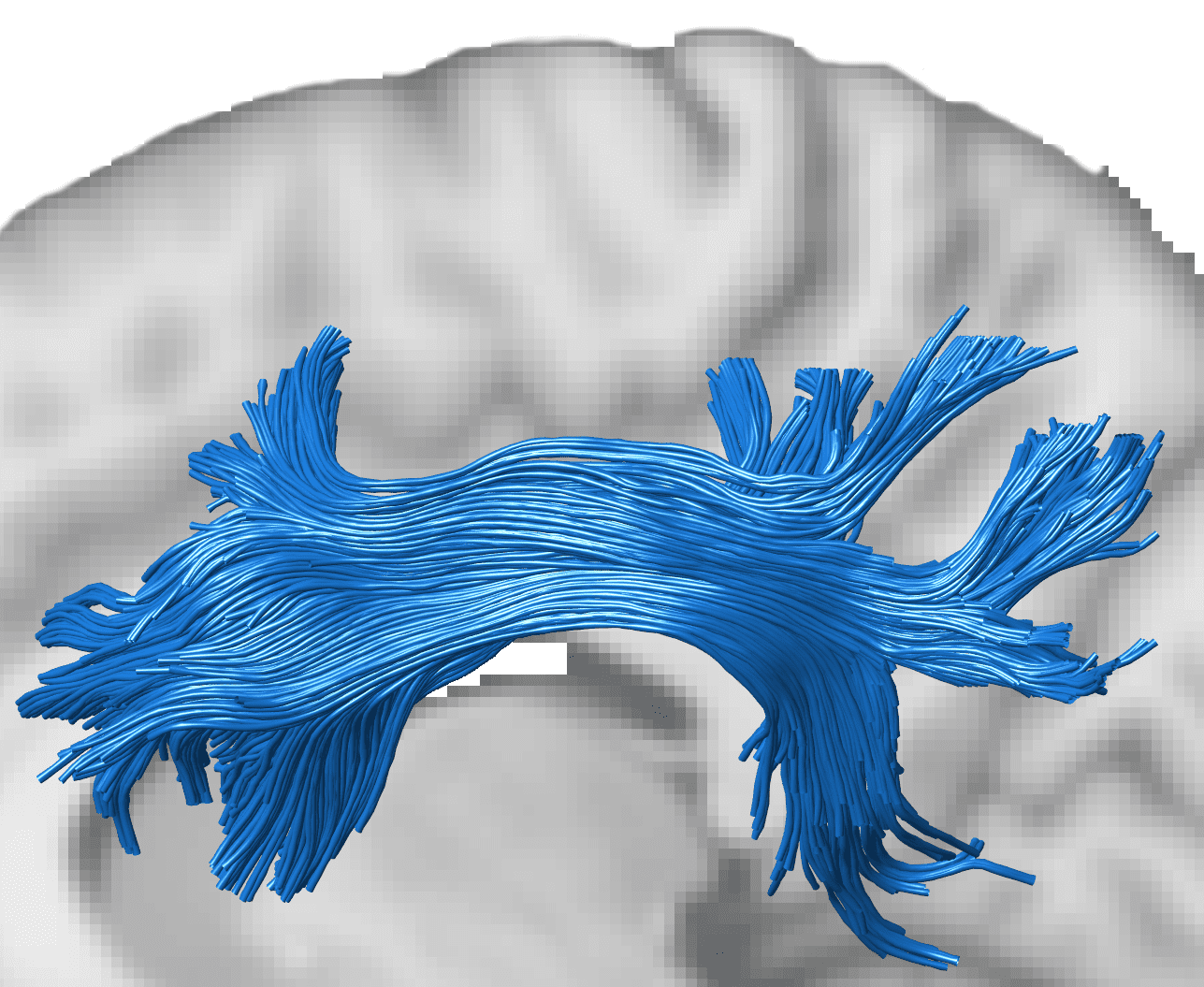

Human Brain Connections

Every second, millions of neurons fire in the brain, generating electrical pulses that travel through neural networks from one brain region to another along connecting cables called “axons.” These connections are essential for the computations the brain performs. Understanding brain connectivity is critical for discovering brain-behavior relationships at scale, adds Varsha Sreenivasan, a Ph.D. candidate at CNS and the study’s first author. However, traditional methods for studying brain connectivity typically rely on animal models and are invasive. dMRI scans, by contrast, offer a non-invasive way to examine connectivity in the human brain.

The axons (cables) that link the brain’s various regions serve as its information highways. Because axon bundles are shaped like tubes, water molecules move through them preferentially along their length. dMRI lets researchers track this directed movement and build a connectome, a detailed map of the brain’s network of fibers.

Identifying these connectomes, however, is not simple. The scan data reveal only the net flow of water molecules at each location in the brain. Sridharan offers an analogy: think of the water molecules as cars. The scans record only the direction and speed of the cars at each point in space and time, with no information about the roads themselves. The researchers’ goal is comparable to inferring the road network by studying these traffic patterns.

Image Source: https://iisc.ac.in/events/using-gpus-to-discover-human-brain-connectivity/

GPU-based Machine Learning Model

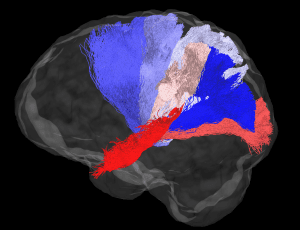

To identify these networks correctly, conventional approaches match the dMRI signal predicted from the inferred connectome as closely as possible to the observed dMRI signal. Scientists had previously developed an algorithm called LiFE (Linear Fascicle Evaluation) to perform this optimization, but one of its drawbacks was that it ran on conventional Central Processing Units (CPUs), which made computations slow.
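In LiFE’s formulation, the measured diffusion signal is modeled as a weighted sum of the signals that each candidate streamline (fascicle) would produce, with the weights constrained to be non-negative. The sketch below is a minimal illustration, not the published implementation: it fits such weights by projected gradient descent on a small synthetic problem. The function name `fit_streamline_weights`, the toy matrix sizes, and the choice of solver are assumptions made for illustration.

```python
import numpy as np

def fit_streamline_weights(M, y, n_iter=10000):
    """Hypothetical helper: solve min_w 0.5 * ||y - M @ w||^2 subject to w >= 0.

    M -- (n_measurements, n_streamlines) matrix; column j holds the dMRI signal
         that candidate streamline j is predicted to produce (toy values here).
    y -- (n_measurements,) observed dMRI signal.
    """
    w = np.zeros(M.shape[1])
    # Step size 1/L, where L = ||M||_2^2 is the Lipschitz constant of the gradient.
    step = 1.0 / np.linalg.norm(M, 2) ** 2
    for _ in range(n_iter):
        grad = M.T @ (M @ w - y)              # gradient of the squared error
        w = np.maximum(w - step * grad, 0.0)  # gradient step, then project onto w >= 0
    return w

# Synthetic example: 8 candidate streamlines, only 4 of which contribute to the signal.
rng = np.random.default_rng(0)
M = rng.random((60, 8))
w_true = np.array([1.0, 0.0, 0.5, 0.0, 0.0, 2.0, 0.0, 0.3])
y = M @ w_true
w_hat = fit_streamline_weights(M, y)
print(np.round(w_hat, 3))
```

Because `y` here is generated without noise, the recovered weights should land close to `w_true`. Real dMRI problems involve far larger matrices, which is where the computational cost of this fitting step comes from.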

In the latest work, Sridharan’s team significantly improved LiFE’s performance by modifying the method to reduce the computational effort it requires in several ways, including by pruning unnecessary connections. The researchers also reworked the algorithm to run on specialized electronic chips called Graphics Processing Units (GPUs), the kind found in high-end gaming computers, which allowed them to analyze data 100 to 150 times faster than earlier methods.
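One way to picture how regularization prunes connections: adding a sparsity penalty to the fitting objective drives the weights of redundant streamlines to exactly zero, and those streamlines can then be discarded. The sketch below assumes an L1 (lasso-style) penalty of strength `lam` and uses proximal gradient descent; the penalty, the solver, the function name `prune_streamlines`, and the toy data are illustrative assumptions, not necessarily what ReAl-LiFE uses. Most of the work is in the matrix-vector products `M @ w` and `M.T @ (...)`, the kind of arithmetic GPUs parallelize well.

```python
import numpy as np

def prune_streamlines(M, y, lam, n_iter=10000, tol=1e-8):
    """Hypothetical sketch: non-negative lasso for streamline weights.

    Minimizes 0.5 * ||y - M @ w||^2 + lam * sum(w) subject to w >= 0
    (for w >= 0 the L1 norm is simply sum(w)), then reports which
    streamlines survive (weight above tol) and which are pruned.
    """
    w = np.zeros(M.shape[1])
    step = 1.0 / np.linalg.norm(M, 2) ** 2
    for _ in range(n_iter):
        grad = M.T @ (M @ w - y)
        # Proximal step for lam * sum(w) with w >= 0: shift by step * lam, clip at zero.
        w = np.maximum(w - step * (grad + lam), 0.0)
    keep = w > tol
    return w, keep

# Synthetic, slightly noisy data (not real dMRI measurements).
rng = np.random.default_rng(1)
M = rng.random((60, 8))
w_true = np.array([1.0, 0.0, 0.5, 0.0, 0.0, 2.0, 0.0, 0.3])
y = M @ w_true + 0.01 * rng.standard_normal(60)

for lam in (0.0, 0.5, 5.0):
    w, keep = prune_streamlines(M, y, lam)
    print(f"lam={lam}: kept {keep.sum()} of {keep.size} streamlines")
```

Raising `lam` trades a slightly worse fit for fewer surviving streamlines; once `lam` exceeds every entry of `M.T @ y`, all weights are zeroed.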

ReAl-LiFE Algorithm

The upgraded algorithm, ReAl-LiFE, was also able to predict how a human test subject would behave or carry out a specific task. Using the connection strengths the algorithm estimated for each individual, the team could explain variations in behavioral and cognitive test scores across 200 participants.
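This brain-behavior analysis can be framed as a regression problem: each participant contributes a vector of connection strengths, and a model trained on some participants predicts the test scores of held-out ones. The sketch below uses closed-form ridge regression with k-fold cross-validation on synthetic data. The model choice, the function names `ridge_fit` and `cross_validated_predictions`, the number of connections, and the data are all assumptions for illustration; the study’s actual predictive pipeline is not described here.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression on centered data, with an unpenalized intercept."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    beta = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    intercept = y_mean - x_mean @ beta
    return beta, intercept

def cross_validated_predictions(X, y, alpha=1.0, k=5, seed=0):
    """Predict each participant's score from a model trained on the other folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    preds = np.empty(len(y), dtype=float)
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        beta, b0 = ridge_fit(X[train_idx], y[train_idx], alpha)
        preds[test_idx] = X[test_idx] @ beta + b0
    return preds

# Synthetic stand-in: 200 participants x 20 connection strengths (not real data).
rng = np.random.default_rng(42)
X = rng.standard_normal((200, 20))
true_beta = rng.standard_normal(20)
scores = X @ true_beta + 0.5 * rng.standard_normal(200)

preds = cross_validated_predictions(X, scores)
r = np.corrcoef(preds, scores)[0, 1]
print(f"cross-validated correlation: r = {r:.2f}")
```

Cross-validation matters here: scoring the model only on participants it never saw during fitting guards against reporting a brain-behavior link that merely reflects overfitting.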

Such analyses also have potential medical applications. According to Sreenivasan, large-scale data processing is becoming increasingly important for big-data neuroscience, particularly for understanding both normal brain function and brain pathology.

For instance, the team hopes to use the resulting connectomes to detect early signs of aging or deteriorating brain function in Alzheimer’s patients before these changes show up in behavior. In another study, Sridharan notes, the researchers found that an earlier version of ReAl-LiFE could outperform competing algorithms at distinguishing patients with Alzheimer’s disease from healthy controls. The GPU-based approach is also highly general and could be applied to optimization problems in many other fields.

Story Source: Sreenivasan, V., Kumar, S., Pestilli, F. et al. GPU-accelerated connectome discovery at scale. Nat Comput Sci 2, 298–306 (2022). https://doi.org/10.1038/s43588-022-00250-z

https://iisc.ac.in/events/using-gpus-to-discover-human-brain-connectivity/


Dr. Tamanna Anwar is a Scientist and Co-founder of the Centre of Bioinformatics Research and Technology (CBIRT). She is a passionate bioinformatics scientist and a visionary entrepreneur. Dr. Tamanna has worked as a Young Scientist at Jawaharlal Nehru University, New Delhi. She has also worked as a Postdoctoral Fellow at the University of Saskatchewan, Canada. She has several scientific research publications in high-impact research journals. Her latest endeavor is developing a platform that serves as a one-stop source for all bioinformatics-related information, as well as a bioinformatics news portal that reports cutting-edge bioinformatics breakthroughs.